Established trust in AI systems is an essential element of network-ready societies. Yet measuring trust can be an unexpectedly challenging task. It requires an understanding of the epistemological and psychological elements that underpin the construct of trust and drive its development in the individual.

When analysing organizational AI readiness, trust is a prerequisite for an optimal societal transition towards widespread adoption of the technology. If trust in AI cannot be established, its implementation and use cannot deliver the results it was developed to achieve. Consequently, a network that distrusts AI necessarily resists its adoption and the benefits it may bring. Empirical research shows that trust is an important predictor of the willingness to adopt a range of AI systems, from product recommendation agents and AI-enabled banking to autonomous vehicles (AVs).

Importantly, and perhaps unsurprisingly, distrust of AI appears to be highest in mission-critical systems, where the technology is responsible for personal safety. For instance, a 2017 study found that while 70% of Canadians trust AI to schedule appointments, only 39% are comfortable with AI piloting autonomous vehicles. While trust has been shown to be important for the adoption of a range of technologies, AI creates an array of trust challenges that are qualitatively different from those posed by more traditional information technologies. In response, governmental bodies like the European Union have made building trust in human-centric AI the benchmark for developing guidelines for future AI technologies. Trustworthy AI places the interests of human beings at its centre; it should therefore not only abide by legal standards, but also observe ethical principles and ensure that its implementations avoid unintended harm.

Nonetheless, the potential risks of AI persist, and because of them 56% of businesses have scaled down their AI implementation in the past year alone. For instance, a recent report released by Pega showed that the large majority of banking customers prefer to interact with, and be helped by, human operators rather than AI systems, which they deem unable to make unbiased decisions and incapable of morality or empathy. The report's findings highlight baseline trends in distrust of AI, foremost among them the very concrete fear of ethnic and gender discrimination by AI-driven decision-making algorithms.

In recent years, many cases have come to light in which AI systems produced discriminatory outcomes for already marginalized populations, collectively reinforcing the perception of AI as untrustworthy. For instance, AI systems have often been involved in discrimination cases in housing, such as tenant selection and mortgage qualification, as well as in recruitment and financial services. An important scenario is algorithmic hiring, in which AI systems are used to filter job applicants in or out during the initial screening steps. Because the traditional hiring process is frequently characterized by multiple forms of prejudice, AI-driven recruitment has often been adopted as a cost-effective strategy for minimizing human bias. Yet bias from the traditional hiring process remains encoded in the data used to train these systems, often resulting in applicants being denied job opportunities because screening algorithms classify them as ineligible or unworthy.

To prevent this phenomenon, there is a pressing need to understand what drives and enables trust, as reflected in many recent calls from policymakers for more research on the matter. However, like most research findings and recommendations, research on AI systems is perceived by the general public as inherently opaque and unexplainable, reinforcing the image of the technology as an indecipherable black box. Still, more research could shed light on the field's blind spots and thereby foster users' cognitive and emotional trust. Companies such as Google, Airbnb and Twitter already provide users with transparency reports on government policies affecting privacy, security and access to information. A similar approach applied to AI systems could help establish a better understanding of how algorithmic decisions are made.

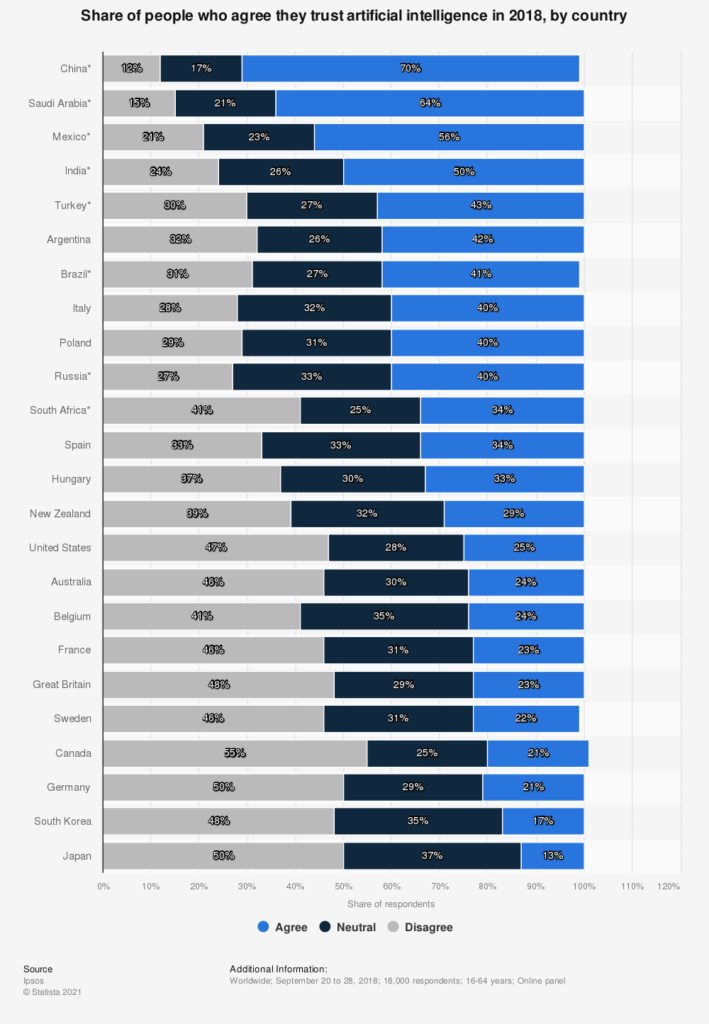

If this hypothesis holds, a nation should display a higher level of trust in AI as the technology is more widely researched and discussed. Interestingly, however, data from the Portulans Institute's 2021 Network Readiness Index (NRI) only partially support this supposition. China ranks first both in the number of AI-related publications and in the level of trust its population places in AI. Similarly, India ranks third and fourth, respectively, in the same two categories. However, the two graphs diverge for Japan, South Korea, Germany, Canada and the United Kingdom, which show some of the highest levels of distrust in AI while producing some of the largest volumes of publications on machine intelligence.

A similar discrepancy appears in the number of publications specifically addressing trust in AI, which does not reflect the level of trust that a given nation places in AI. Systematic research covering all publications on AI and trust shows the United States as the undisputed leader in the field, with a total of 40.71 publications, followed by the United Kingdom (16.22), China (7.64) and Germany (5.58). Yet the United States, the United Kingdom and Germany also display some of the highest levels of distrust in AI (ranking 15th, 19th and 22nd, respectively).

The observed divergence from the initial hypothesis could be attributed to several factors. The first is the three-year gap between the survey conducted by Statista and the results of the NRI. Given the rapid development of AI since 2018, public sentiment towards AI may well have changed. While the literature on how trust in AI develops over time is scarce, more is known about human trust in technology overall, a relationship shaped by a plethora of variables. Among these, the ongoing Covid-19 pandemic has exacerbated pre-existing fears about technology, in particular tech-related job loss and reluctance to share personal data. According to Frey and Osborne (2013), 47% of U.S. employment is at risk of being fully or partially supplanted by the incremental implementation of artificial intelligence. In particular, the sectors most likely to be almost entirely digitalised are those requiring lower levels of education.

Second, the visibility of published research, and public engagement with it, deserve particular consideration. A large number of publications does not necessarily mirror the overall level of discussion of a given topic. This could represent an additional dimension for future readiness indices, accompanied by a broader analysis of the importance of knowledge sharing at the national and international levels.

Lastly, perhaps at the core of the present discrepancy is the difficulty of defining and, most importantly, measuring trust. Even agreeing on a common definition of trust, including whether it is a conscious or unconscious decision made by each individual, poses substantial difficulties for its measurement. On one side of the debate, some scholars argue that trust in AI is merely an illusory choice, because individuals who distrust or even fear AI have no real ability to reject it. Unlike more discrete forms of technology, AI cannot easily be discarded as a single object; rather, it is embedded within digital technologies or, even more insidiously, within the digital economy itself. Further, if AI continues to develop at the current pace, it will soon be a constant element in all forms of technology, nullifying any possibility of rejecting AI. According to this view, individuals are not true users of AI, but rather subjects of it.

Similarly, the 2021 Network Readiness Index (NRI) echoes this conceptualization of trust as a largely unconscious phenomenon rather than a conscious choice made by the general public. More specifically, the NRI identifies trust as one of the three sub-pillars of governance. In this context, governance is conceptualized as the supporting infrastructure underlying a safe and secure network for its users. Secure network activity is in turn facilitated at three levels; among these, trust is defined as the overall feeling of safety that individuals and firms share in the network economy, reflected both in an environment conducive to trust and in the trusting behavior of the population.

Accordingly, the NRI 2021 measures trust through the number of secure internet servers, the level of cybersecurity commitment made by each country, and the use of the Internet as a purchasing platform. These factors lie largely outside the individual sentiment of trust and are more indicative of the overall digital readiness of the network as a whole.

— Claudia Fini

Claudia Fini is an Associate Researcher at the UNESCO Chair in Bioethics and Human Rights based in Rome, Italy, where she has analysed the ethical, legal and public policy challenges of artificial intelligence with a focus on human rights and sustainable development goals. She is passionate about trauma-informed policies and practices that will make the transition to a digital society safer and more effective for all populations. Claudia holds a BSc in Neuroscience from King’s College London and an MPhil in Criminology from the University of Cambridge.